I want to migrate my Nextcloud instance from MariaDB over to PostgreSQL. I already have a PostgreSQL service running for Lemmy. And I’m pretty starved for RAM.

Would it be better to just have one PostgreSQL service running that serves both Nextcloud and Lemmy? Or should every service have its own PostgreSQL instance?

I’m pretty new to PostgreSQL. But in my mind I would tend towards one service to serve them all and let it figure out by itself how to split resources between everything. Especially when I think that in the long run I will probably migrate more services over to PostgreSQL (and upgrade the RAM).

But maybe I am overlooking something.

Edit: Thanks guys, I’ve settled on a single instance for now. After a little tuning, everything seems to be running better than ever, with room to spare.

If you’re the only user and just want it working without much fuss, use a single DB instance and forget about it. Less to maintain means better maintenance, unless performance matters more to you.

It’s fairly straightforward to migrate a db to a new postgres instance, so you’re not shooting yourself in a future foot if you change your mind.

Use PGTune to get as much as you can out of it and adjust if circumstances change.
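For a feel of what a tuning tool like PGTune is doing, here is a minimal Python sketch. It uses common rules of thumb, not PGTune's actual formulas: roughly 25% of the RAM you budget for Postgres as `shared_buffers` (the starting point the PostgreSQL docs suggest for a dedicated server), roughly 75% as `effective_cache_size` (a planner hint, not an allocation), and a small per-operation `work_mem`. The function name, the `maintenance_work_mem` cap and the `work_mem` divisor are assumptions for illustration.

```python
"""Rough PostgreSQL memory settings from rules of thumb (NOT PGTune's exact formulas)."""


def suggest_settings(ram_budget_mb: int, max_connections: int = 100) -> dict[str, str]:
    """Suggest memory settings for the RAM you are willing to give Postgres.

    Hypothetical helper: every ratio below is an assumed rule of thumb.
    """
    # ~25% of the budget for Postgres' own buffer cache
    shared_buffers = ram_budget_mb // 4
    # ~75%: only tells the planner how much OS file cache to expect, allocates nothing
    effective_cache_size = ram_budget_mb * 3 // 4
    # used by VACUUM, CREATE INDEX etc.; ~1/16 of the budget, capped at 2 GB (assumed)
    maintenance_work_mem = min(ram_budget_mb // 16, 2048)
    # work_mem applies per sort/hash operation, so several can run per connection;
    # spreading the non-buffer RAM over 4x max_connections is an assumed safety margin,
    # never going below the 4 MB default
    work_mem = max((ram_budget_mb - shared_buffers) // (max_connections * 4), 4)
    return {
        "shared_buffers": f"{shared_buffers}MB",
        "effective_cache_size": f"{effective_cache_size}MB",
        "maintenance_work_mem": f"{maintenance_work_mem}MB",
        "work_mem": f"{work_mem}MB",
        "max_connections": str(max_connections),
    }


if __name__ == "__main__":
    # e.g. a shared instance allowed 4 GB on a 16 GB box that also runs other services
    for key, value in suggest_settings(4096).items():
        print(f"{key} = {value}")
```

The printed lines can go into `postgresql.conf` (changing `shared_buffers` needs a restart); then adjust as circumstances change.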

> It’s fairly straightforward to migrate a db to a new postgres instance, so you’re not shooting yourself in a future foot if you change your mind.

That’s what I needed to hear. I’ll just try it out and see what works best for me. Stupid me didn’t even think of that.

I’m not really bothered about services going down all at once. The server is mostly just used by me and my family. We’re not losing money if it’s out for an hour or so.

Remember that database servers were designed to host multiple databases for multiple users. As long as you’re working with maintained software (and you are), it should be pretty trivial to run everything on the latest version of Postgres and have it just work on one instance if you’re resource-constrained.
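On a shared instance, each application typically gets its own role and its own database, so Nextcloud and Lemmy never touch each other's tables. A minimal Python sketch that prints the SQL for that; the role and database names are assumptions (use whatever each app is configured to connect with), and the password is deliberately left out so it can be set interactively with psql's `\password` command.

```python
def quote_ident(name: str) -> str:
    """Quote a PostgreSQL identifier by wrapping it in double quotes (doubling any inside)."""
    return '"' + name.replace('"', '""') + '"'


def per_app_sql(app: str) -> list[str]:
    """SQL giving one application its own login role and its own database."""
    ident = quote_ident(app)
    return [
        # login role named after the app (set its password afterwards with \password)
        f"CREATE ROLE {ident} LOGIN;",
        # database owned by that role, so the app can create its own tables
        f"CREATE DATABASE {ident} OWNER {ident};",
        # PUBLIC may connect to any database by default; leave only the owner (and superusers)
        f"REVOKE CONNECT ON DATABASE {ident} FROM PUBLIC;",
    ]


if __name__ == "__main__":
    for app in ("nextcloud", "lemmy"):  # hypothetical role/database names
        print("\n".join(per_app_sql(app)))
```

The output can be piped into `psql` as the `postgres` superuser; skip any app whose database already exists.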

Definitely a good point about being able to migrate as well. Postgres has excellent tools for this sort of thing.

In a hobby it’s easy to get carried away into doing things according to “best practices” when it’s not really the point.

I’ve done a lot of redundant boilerplate stuff in my homelab, and I justify it by “learnding”. It’s mostly perfectionism I don’t have time and energy for anymore.

There are pros and cons to each…

There’s the question of isolation. One shared service going down brings down multiple applications. But it can be more resource efficient to have a single DB running. If you’re doing database maintenance it can also be easier to have one instance to tweak. But if you break something it impacts more things…

Generally speaking I lean towards one db per application. Often in separate containers. Isolation is more important for what I want.

I don’t think anyone would say you’re wrong for using a single shared instance though. It’s a more “traditional” way of treating databases actually (from when hardware was more expensive).

Native, docker, something else? How starved are you at the moment?

If I went with a shared service I would run it natively on Debian Stable.

Lemmy currently uses a dockerised PostgreSQL service. I’ve got 16 GB of RAM, which is currently mostly occupied by MariaDB and the PostgreSQL Docker container.

Your Postgres and/or MariaDB is probably configured to take as much RAM as it can get. It shouldn’t use that much with the light workloads you’re likely to have.

Have you tried limiting the RAM usage of those containers? They tend to use as much as you give them, which is all of it by default.
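A minimal sketch of capping a Postgres container's RAM, assuming plain `docker run` and the official `postgres` image (which passes `-c name=value` arguments through to the server). The container name, the `postgres.env` file holding `POSTGRES_PASSWORD`, the volume name and the sizes are hypothetical; in Docker Compose the equivalent cap is the service's `mem_limit` key.

```python
import shlex


def postgres_run_argv(name: str, memory: str, shared_buffers: str,
                      image: str = "postgres:16") -> list[str]:
    """docker run arguments for a Postgres container with a hard RAM cap (hypothetical names)."""
    return [
        "docker", "run", "-d",
        "--name", name,
        # cgroup memory limit: the container cannot use more RAM than this
        "--memory", memory,
        # hypothetical env file containing POSTGRES_PASSWORD=...
        "--env-file", "postgres.env",
        # named volume so the data survives re-creating the container (path used by postgres:16)
        "-v", f"{name}-data:/var/lib/postgresql/data",
        image,
        # keep shared_buffers well below the cap: per-connection memory comes on top of it
        "-c", f"shared_buffers={shared_buffers}",
    ]


if __name__ == "__main__":
    print(shlex.join(postgres_run_argv("shared-postgres", "2g", "512MB")))
```

If the container goes over the limit, processes inside it can be OOM-killed, so it's worth leaving some headroom between `shared_buffers` and the cap.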

That was more or less the default of the PostgreSQL container, and it ran like ass because I don’t have an SSD.

Basically I had to give MySQL a ton of RAM for Nextcloud, and PostgreSQL a ton for Lemmy. For now I’ve put both on the same PostgreSQL instance and let them battle it out for the assigned RAM.

I used to have one database container for each service, because “if something happens”…

Now I have one Postgres container for all databases, fine-tuned with PGTune, and I’ve never regretted it. What matters is a proper backup, e.g. a dump with pg_dumpall.
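A minimal Python sketch of the pg_dumpall backup mentioned above, writing a timestamped plain-SQL dump of every database plus roles. It assumes the `postgres` user can connect without a password prompt (for example via peer auth or a `~/.pgpass` file); the backup directory and container name are hypothetical. For a dockerised Postgres it runs pg_dumpall inside the container with `docker exec` and captures its stdout on the host.

```python
import datetime
import subprocess
from pathlib import Path
from typing import Optional


def dump_argv(user: str = "postgres", container: Optional[str] = None) -> list[str]:
    """Command that dumps all databases (plus roles) to stdout."""
    argv = ["pg_dumpall", "-U", user]
    if container:
        # run the client inside the (hypothetical) container; output still arrives on our stdout
        argv = ["docker", "exec", container, *argv]
    return argv


def backup_path(backup_dir: Path, now: datetime.datetime) -> Path:
    """Timestamped file name so older dumps are not overwritten."""
    return backup_dir / f"pg_dumpall-{now:%Y%m%d-%H%M%S}.sql"


def run_backup(backup_dir: Path, container: Optional[str] = None) -> Path:
    backup_dir.mkdir(parents=True, exist_ok=True)
    target = backup_path(backup_dir, datetime.datetime.now())
    try:
        with target.open("wb") as out:
            # check=True raises CalledProcessError if pg_dumpall exits non-zero
            subprocess.run(dump_argv(container=container), stdout=out, check=True)
    except subprocess.CalledProcessError:
        target.unlink(missing_ok=True)  # don't leave a truncated dump behind
        raise
    return target


if __name__ == "__main__":
    print(run_backup(Path("/var/backups/postgres"), container="shared-postgres"))
```

A dump like this can be restored into a fresh instance with `psql -U postgres -f <dump file> postgres`; testing a restore once beats trusting the dump blindly.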

More performance, less overhead. If you are confident and experienced enough with Docker, run Postgres on your host instead of in a container. But one container for all databases is okay too.

Some people will disagree and that’s fine, but this is how I manage it. It’s not that complicated ☺️

> Would it be better to just have one PostgreSQL service running that serves both Nextcloud and Lemmy

Yes, performance and maintenance-wise.

If you’re concerned about database maintenance bringing down multiple services (I can’t remember the last time I had to do any; maybe once every few years to migrate Postgres clusters to the next major version?), set up master-slave replication and be done with it.
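For the replication route, one common way to create a streaming-replication standby is to clone the primary with `pg_basebackup`. A minimal sketch that builds that command; the host name, replication role and data directory are hypothetical, and it assumes the primary already has a role with the `REPLICATION` attribute and a matching `replication` entry in `pg_hba.conf`.

```python
import shlex


def standby_clone_argv(primary_host: str, repl_user: str, data_dir: str) -> list[str]:
    """pg_basebackup command that turns an empty data directory into a standby."""
    return [
        "pg_basebackup",
        "-h", primary_host,   # the running primary
        "-U", repl_user,      # role with the REPLICATION attribute
        "-D", data_dir,       # must be empty or absent; becomes the standby's data dir
        "-X", "stream",       # stream WAL during the copy so the backup is consistent
        "-R",                 # write standby.signal and primary_conninfo (PostgreSQL 12+)
        "-P",                 # report progress
    ]


if __name__ == "__main__":
    print(shlex.join(standby_clone_argv("db-primary", "replicator",
                                        "/var/lib/postgresql/16/standby")))
```

Starting PostgreSQL on that directory brings it up as a read-only standby following the primary. During maintenance on the primary it can be promoted with `pg_ctl promote` (or `SELECT pg_promote();`), though the apps then have to be pointed at it.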

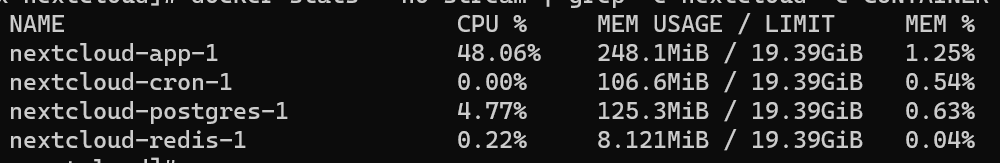

Here’s the

docker statsof my Nextcloud containers (5 users, ~200GB data and a bunch of apps installed):

No DB wiz by a long shot, but my guess is that most of that 125MB is actual data. Other Postgres containers for smaller apps run 30-40MB. Plus the container separation makes it so much easier to stick to a good backup strategy. Wouldn’t want to do it differently.
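To compare numbers like these across containers, a minimal Python sketch can read `docker stats --no-stream` output and report each container's current memory use. It assumes the `MemUsage` column looks like `125.3MiB / 15.54GiB` (usage / limit) with binary or decimal unit suffixes; the helper names are hypothetical.

```python
import re
import subprocess

# unit suffixes that may appear in docker's MemUsage column (assumed set)
_UNITS = {
    "B": 1, "KiB": 1024, "MiB": 1024**2, "GiB": 1024**3, "TiB": 1024**4,
    "kB": 1000, "KB": 1000, "MB": 1000**2, "GB": 1000**3,
}


def parse_size(text: str) -> int:
    """Convert a size such as '125.3MiB' to bytes."""
    m = re.fullmatch(r"\s*([\d.]+)\s*([A-Za-z]+)\s*", text)
    if not m or m.group(2) not in _UNITS:
        raise ValueError(f"unrecognised size: {text!r}")
    return int(float(m.group(1)) * _UNITS[m.group(2)])


def memory_by_container(stats_output: str) -> dict[str, int]:
    """Map container name -> bytes in use, from 'name<TAB>used / limit' lines."""
    usage = {}
    for line in stats_output.strip().splitlines():
        name, mem = line.split("\t", 1)
        used = mem.split("/", 1)[0]  # "125.3MiB / 15.54GiB" -> "125.3MiB "
        usage[name] = parse_size(used)
    return usage


if __name__ == "__main__":
    out = subprocess.run(
        ["docker", "stats", "--no-stream", "--format", "{{.Name}}\t{{.MemUsage}}"],
        capture_output=True, text=True, check=True,
    ).stdout
    for name, used in sorted(memory_by_container(out).items()):
        print(f"{name}: {used / 1024**2:.1f} MiB")
```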

Postgres doesn’t need that much RAM IMO, though it may use as much as you give it. I’d reduce its RAM and see how performance changes.

If you’re starved for RAM, there’s nothing wrong with a shared instance, as long as you’re aware of the risk of that single instance bringing down multiple services.

I run a three node Proxmox cluster, and two nodes have 80GB RAM each, so my situation is very different to yours. So, I have four Postgres instances:

- Mission critical: pretty much my RADIUS database, for wireless auth and not much else (yet)

- Important: paperless-ngx, and other similarly important services

- Immich: because Immich has a very specific set of Postgres requirements

- Meh: 2 x Sonarr, 3 x Radarr, 1 x Lidarr (not fussed if this instance goes down and takes all of those services with it)

I like to have them separated by service. I have 3 or 4 DBs running, and at least 2 of them are PostgreSQL.


Separate DB container for each service. Three main reasons:

1. If one service requires special configuration that affects the whole DB container, that configuration won’t cross over to other services and potentially cause issues there.

2. You can keep one DB container back on an older version if a newer version of the DB is incompatible with one of the services that rely on it.

3. You can roll back the dataset for one DB container after a screwup or a bad service update (e.g. Lemmy) without affecting other services.

In general, I’d recommend only sharing a DB container if you have special DB tuning in place or if the services that use it are interdependent.